Introduction

In 2025, A/B testing remains a fundamental pillar of conversion rate optimization, enabling marketers to make data-driven decisions that improve user experience and maximize revenue. With digital competition at an all-time high, small optimizations—like refining a CTA button or tweaking a headline—can lead to significant uplifts in conversions. As personalization and AI-driven automation become more sophisticated, A/B testing strategies must evolve to incorporate predictive insights while maintaining a rigorous, structured approach to experimentation. The businesses that continuously test, iterate, and optimize will outperform those that rely on static, one-size-fits-all solutions.

Yet, many organizations still fall into the trap of making gut-driven marketing decisions rather than letting real user behavior guide them. A well-designed A/B test removes bias, helping businesses identify what truly resonates with their audience. Without structured A/B testing tools and methodologies, marketers risk implementing changes that seem promising but ultimately hurt engagement, conversions, or revenue. In an era where personalization and data-driven marketing are key to success, relying on assumptions is not just inefficient—it’s costly.

This guide will walk you through a step-by-step framework for building an A/B testing strategy in 2025. From understanding different types of tests to setting up meaningful experiments and analyzing results, you’ll gain actionable insights to refine your approach. Whether you’re optimizing landing pages, email campaigns, or ad creatives, this guide will help you make confident, data-backed decisions that drive sustained growth.

What is A/B Testing and why does it matter?

At its core, A/B testing is a controlled experiment that compares two variations of a webpage, email, ad, or app to determine which one performs better. By splitting traffic between the two versions—A (the control) and B (the variation)—marketers can measure the impact of changes on key performance metrics like conversion rate, engagement, and revenue.

A/B testing is the foundation of data-driven marketing because it eliminates guesswork and validates decisions with real user behavior. Instead of making subjective changes based on assumptions, businesses can rely on empirical data to refine messaging, design, and functionality. This structured approach not only enhances user experience but also ensures that optimizations align with audience preferences.

Moreover, continuous A/B testing compounds improvements over time, leading to incremental growth. A single test might yield a minor uplift, but a well-executed A/B testing strategy can generate sustained performance gains. Companies that embrace experimentation as an ongoing process—rather than a one-time initiative—stay ahead in competitive markets.

Types of A/B Testing

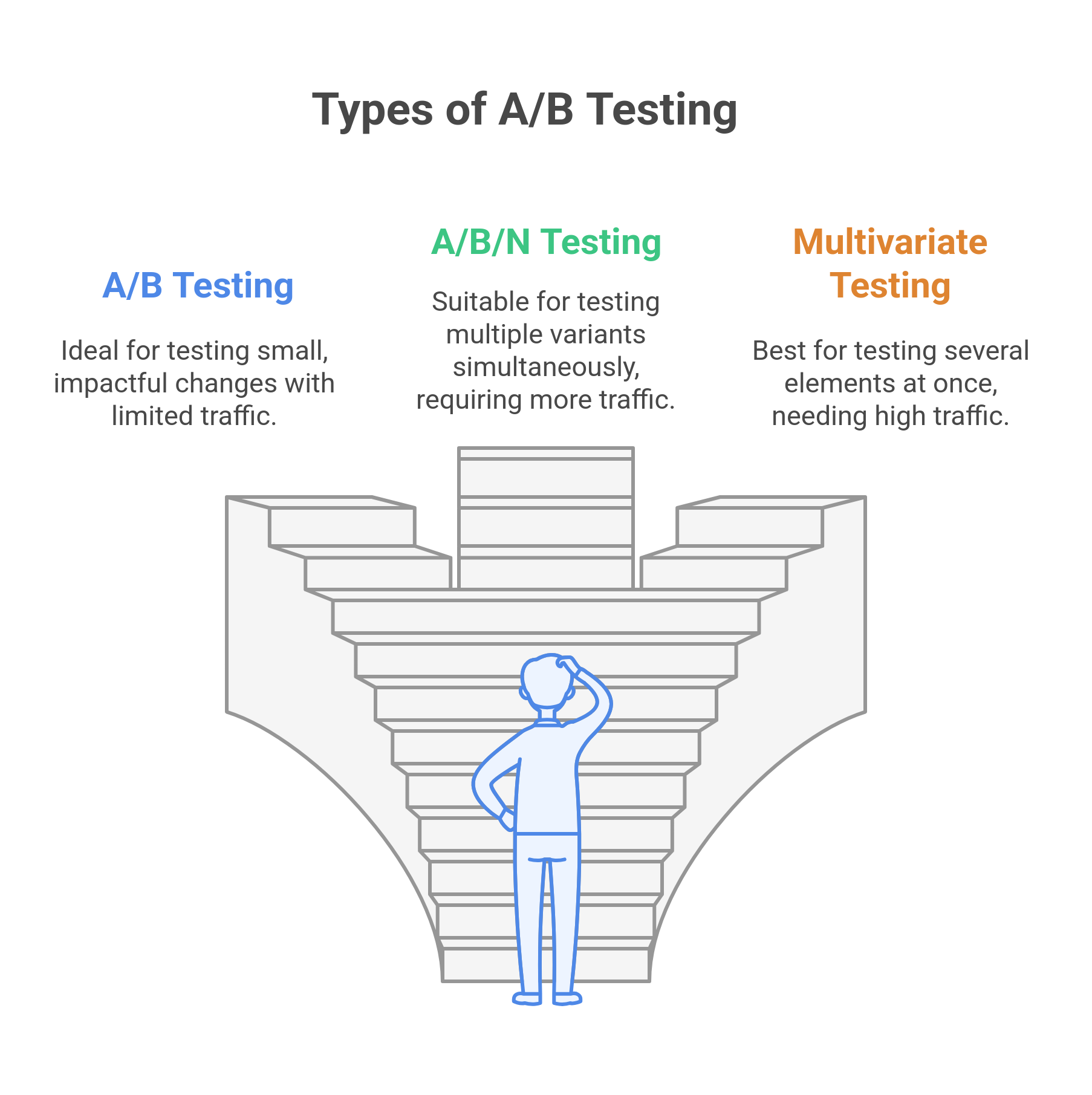

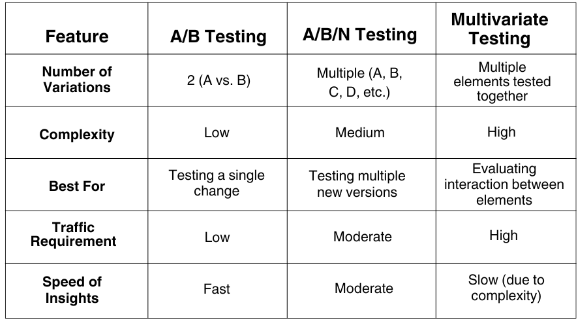

Not all A/B tests are the same. Depending on the complexity of the experiment and the number of variations being tested, different testing methodologies come into play. Here are the three primary types of A/B testing:

- A/B Testing (Split Testing)

  - The most common form of experimentation. It compares two versions (A and B) of a webpage, ad, or email to determine which one yields better results.

  - Typically used for testing single variables like headlines, CTA buttons, or images.

  - Ideal for straightforward experiments where one element is changed at a time.

- A/B/N Testing

  - Expands on A/B testing by allowing multiple variations (A, B, C, D, etc.) to be tested simultaneously.

  - Useful when testing multiple new ideas at once instead of running sequential A/B tests.

  - Requires more traffic to achieve statistical significance due to the multiple variations.

- Multivariate Testing (MVT)

  - Tests multiple elements of a page simultaneously to identify the best combination.

  - Instead of testing just one variable, MVT evaluates how different design and content elements interact.

  - Ideal for websites with high traffic, as MVT requires more data to reach reliable conclusions.

Key Differences Between A/B, A/B/N, and Multivariate Testing

Each method serves a different purpose in conversion rate optimization. A/B testing is ideal for quick, iterative improvements, A/B/N testing allows for broader experimentation, and multivariate testing helps uncover the most effective combinations of elements. Choosing the right approach depends on factors like website traffic, test complexity, and business objectives.
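To make the traffic trade-off concrete, here is a minimal sketch of how quickly the number of page versions grows in a multivariate test and how thinly the same traffic is then spread. The page elements, option counts, and traffic figures are hypothetical, and the even split across versions is an assumption.

```python
from math import prod


def mvt_combinations(options_per_element):
    """Number of page versions in a full-factorial multivariate test."""
    return prod(options_per_element.values())


def visitors_per_version(daily_visitors, days, versions):
    """Visitors each version receives, assuming traffic is split evenly."""
    return daily_visitors * days // versions


# Hypothetical page: 3 headlines, 2 hero images, 2 CTA labels.
elements = {"headline": 3, "hero_image": 2, "cta_label": 2}
versions = mvt_combinations(elements)

# Same traffic (2,000 visitors a day for 14 days) under each method.
for name, count in [("A/B", 2), ("A/B/N with 4 variants", 4), ("MVT", versions)]:
    per_version = visitors_per_version(daily_visitors=2000, days=14, versions=count)
    print(f"{name}: {count} versions, {per_version} visitors each")
```

Each added version shrinks the sample behind every individual comparison, which is why A/B/N and multivariate tests need more traffic or longer run times than a simple A/B test.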

How Does A/B Testing Work?

A/B testing is a structured approach to experimentation, ensuring that changes to a website, app, or marketing campaign are data-driven rather than based on assumptions. Running a successful A/B test requires a systematic process, from defining a goal to analyzing results. Below, we break down each step in detail.

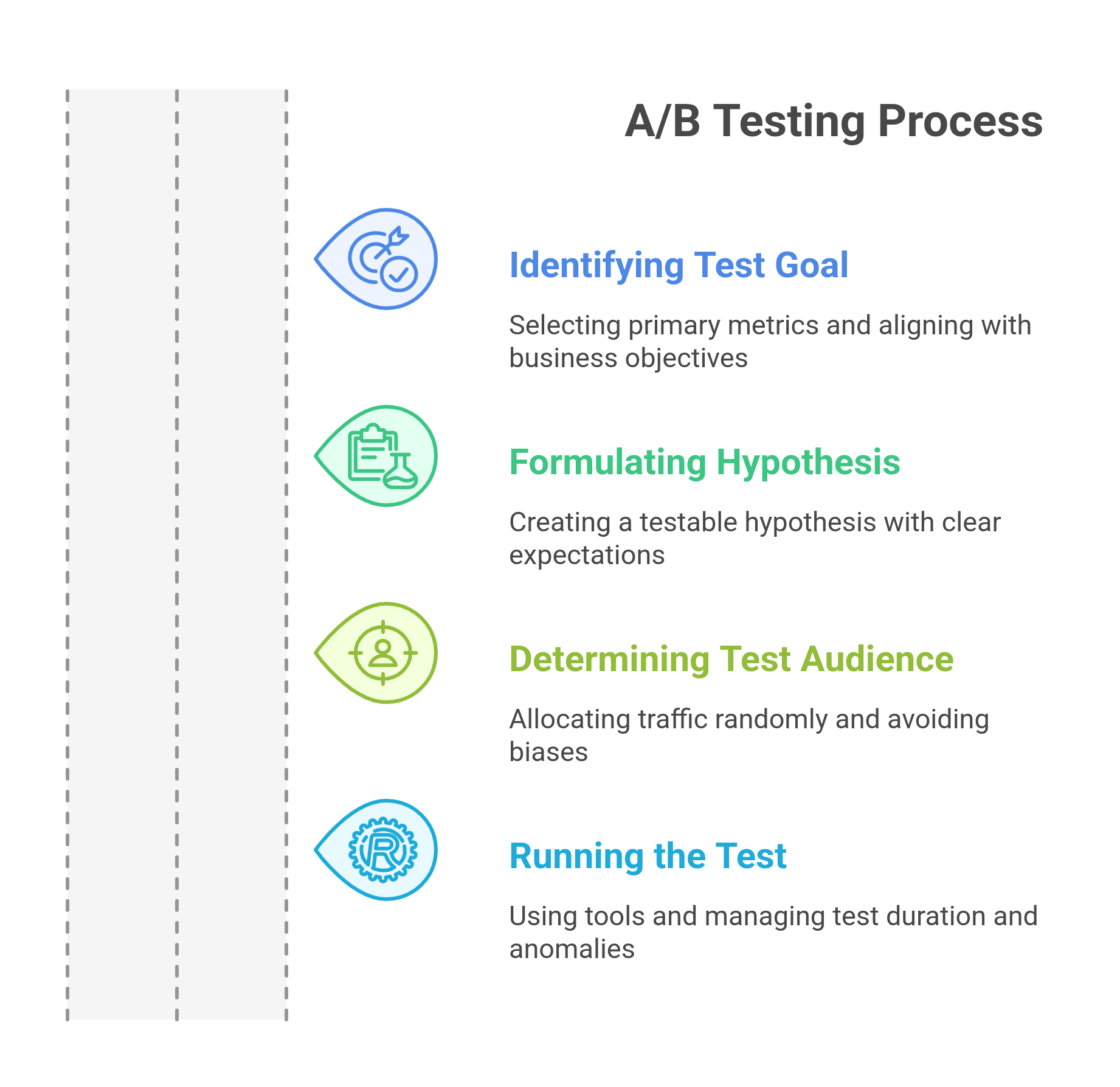

Step 1: Identifying the Goal of Your Test

Every A/B test must start with a well-defined goal. Without a clear objective, test results may be inconclusive or fail to deliver actionable insights. The goal should focus on a single primary metric that reflects the impact of a change. Some common A/B testing goals include:

- Improving conversion rates – Increasing form submissions, demo requests, or purchases.

- Boosting click-through rates (CTR) – Getting more users to engage with a call-to-action (CTA) button, email link, or ad.

- Reducing bounce rates – Encouraging users to stay on a page longer and explore more content.

- Increasing revenue per visitor (RPV) – Optimizing pricing strategies, discounts, or checkout flows.

- Enhancing user engagement – Measuring metrics such as time on page, scroll depth, or interaction rates.

To align an A/B testing strategy with broader business objectives, consider the following examples:

- If the company’s goal is to increase lead generation, an A/B test might focus on optimizing a landing page’s form length or CTA text.

- If the goal is to increase purchases, testing different product page layouts, pricing displays, or checkout flows could provide valuable insights.

- If improving content engagement is a priority, testing headline variations or article structures could reveal what keeps users reading.

Defining a goal upfront ensures that the test results contribute directly to business growth.

Step 2: Formulating a Hypothesis

A hypothesis serves as the foundation for any A/B test. It should be a clear, testable statement that predicts the expected outcome of a change. A well-structured hypothesis follows this format:

"If we change [element], we expect [outcome] because [reason]."

For example:

- Strong hypothesis: "If we change the CTA button color from blue to orange, we expect the conversion rate to increase because orange contrasts more with the background, making it more visible."

- Weak hypothesis: "Changing the CTA button color might improve conversions." (This is too vague and lacks a clear rationale.)

A strong hypothesis helps define the specific variable being tested, the expected result, and the reasoning behind it. Without this clarity, interpreting test results becomes difficult.

Step 3: Determining your test audience and traffic split

Selecting the right audience and dividing traffic properly is crucial for obtaining reliable A/B test results.

Randomized Traffic Allocation: To avoid bias, traffic must be randomly split between the control (A) and variation (B). This ensures that both groups are exposed to similar external factors, making the comparison fair.

Full-Site vs. Segmented Tests

- Full-site tests: Applied to all users (e.g., testing a new homepage design).

- Segmented tests: Run on specific user groups (e.g., new visitors vs. returning customers, mobile vs. desktop users).

Segmented tests provide deeper insights but require a larger sample size to achieve statistical significance.

Avoiding Sample Pollution

External factors can distort test results. Common issues include:

- Seasonality effects – Running an A/B test during Black Friday or the holiday season might not reflect normal user behavior.

- Traffic spikes – An unexpected influx of visitors from a marketing campaign or a viral event could skew results.

- Returning visitors seeing both variations – Users who see version A on one visit and version B on another may behave inconsistently.

To prevent sample pollution, use A/B testing tools that track users consistently and exclude bot traffic.
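One common way to get both a random split and consistent assignment for returning visitors is to hash a stable user identifier. The sketch below illustrates that idea and is not how any particular tool works; the function name, the experiment key, and the assumption that a stable `user_id` (for example from a first-party cookie or login) is available are all hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Deterministically assign a user to 'A' (control) or 'B' (variation).

    The same user_id and experiment always produce the same answer, so a
    returning visitor keeps seeing the version they were first assigned.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "A" if bucket < split else "B"


# Hypothetical usage: the experiment name keeps assignments independent
# across different tests running on the same audience.
print(assign_variant("user-1842", "homepage-hero-test"))
```

Including the experiment name in the hashed key means a user who lands in group A for one test is not automatically in group A for every other test.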

Step 4: Running the Test

Once the hypothesis is formed and the audience is determined, the test can be launched using an A/B testing tool.

Choosing an A/B Testing Tool

There are several A/B testing tools available, each catering to different needs:

- Google Optimize – Formerly a free option that integrated with Google Analytics. Google discontinued it on September 30, 2023, so it is no longer available for new tests.

- Fragmatic – A B2B web personalization tool with built-in A/B testing and AI-driven recommendations.

The choice of tool depends on budget, traffic volume, and the level of integration required.

Determining Test Duration

One of the most common mistakes in A/B testing is stopping a test too soon. To ensure reliable results, decide the required sample size before launch, run the test until that sample is collected, and only then evaluate statistical significance (typically at a 95% confidence level). Key considerations:

- Sample size matters – Use a sample size calculator to estimate how many visitors are needed before starting the test.

- Short-term fluctuations can be misleading – Early results may not be reliable, so avoid making premature decisions.

- Consider the business cycle – If the site experiences weekly traffic patterns, the test should run for at least a full cycle.

A/B testing tools often provide statistical significance calculators to determine when a test has collected enough data.
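As a rough illustration of what a sample size calculator does, the sketch below uses the standard normal-approximation formula for comparing two proportions and then rounds the run time up to whole weekly cycles. The baseline rate, minimum lift, 80% power, and daily traffic are hypothetical inputs, and real tools may use somewhat different formulas.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, min_relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + min_relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # 95% confidence by default
    z_beta = NormalDist().inv_cdf(power)           # 80% power (an assumption)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def estimate_duration_days(n_per_variant, daily_visitors, variants=2, cycle_days=7):
    """Days needed to collect the sample, rounded up to full business cycles."""
    days = ceil(n_per_variant * variants / daily_visitors)
    return ceil(days / cycle_days) * cycle_days


# Hypothetical test: 5% baseline conversion, detect a 20% relative lift.
n = sample_size_per_variant(baseline_rate=0.05, min_relative_lift=0.20)
print(n, "visitors per variant")
print(estimate_duration_days(n, daily_visitors=1500), "days")
```

Smaller expected lifts raise the required sample sharply, because the difference between the two rates is squared in the denominator.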

Handling Mid-Test Anomalies

Unexpected factors can disrupt a test. Some common issues include:

- Traffic spikes from external campaigns – If a test receives an unusual traffic surge due to an email blast or paid ad campaign, results may be skewed.

- Bot traffic – Automated traffic can distort click-through rates and conversions. Filtering out bots ensures cleaner data.

- External market shifts – If a competitor launches a major campaign during the test, user behavior might be affected.

When anomalies occur, extending the test duration or filtering out impacted data segments can help ensure more accurate insights.

What can you A/B Test? (high-impact elements to experiment with)

A/B testing is only as effective as the elements you choose to experiment with. Testing random changes without a strategy won’t drive meaningful results. Instead, focusing on high-impact elements—those directly influencing conversions—ensures you get actionable insights that lead to measurable improvements. Let’s break down some of the most valuable elements you can test.

Website Elements

Your website is often the first touchpoint with potential customers, making it a prime area for optimization. One of the easiest yet most impactful elements to test is your headlines and subheadings. A compelling, clear headline can immediately grab attention, while emotional triggers or keyword optimization can increase engagement. Similarly, your CTA (Call-to-Action) buttons—whether it’s the text, color, placement, or size—can dramatically impact click-through rates. A simple change from “Get Started” to “Start Your Free Trial” can improve clarity and intent. Forms are another crucial area to experiment with, as reducing unnecessary fields or switching to a multi-step process can significantly increase completion rates. Lastly, trust signals like customer testimonials, security badges, and case studies help build credibility—where they’re placed on the page can determine whether visitors feel confident enough to convert.

Landing Pages

Landing pages are designed for conversions, making them one of the most valuable areas to A/B test. The hero section, often the first thing visitors see, can make or break engagement. Should you use a static image or a video background? A/B testing can provide a data-driven answer. Another powerful experiment is the placement of social proof: should testimonials sit at the top of the page for instant credibility, or do they work better further down, once visitors have read about the offer? Testing different layouts can reveal what works best for your audience. Lastly, the navigation structure can impact user behavior. A sticky menu that follows the user might improve ease of navigation, but in some cases, it can also be distracting. Testing different navigation styles shows which one actually helps visitors on your site.

Email Marketing

Email campaigns are a goldmine for A/B testing, as even small tweaks can lead to higher open rates and engagement. One of the simplest yet most effective tests is subject lines—does curiosity-based phrasing perform better than urgency-driven messaging? What about the use of emojis? The answer varies depending on the audience and industry. Personalization is another critical factor—testing whether emails that include a recipient’s first name or company name outperform generic versions can offer valuable insights into how your audience responds to tailored messaging. Finally, the send time of your email matters. Does your audience engage more with emails sent in the morning versus the evening? Do weekends outperform weekdays? A/B testing can help optimize your timing for maximum engagement.

Ad Campaigns

Digital ads thrive on constant testing, and the most successful advertisers never stop optimizing. Creative variations play a major role—should you use a static image, a carousel, or a video? Different formats appeal to different audiences, and testing helps determine what resonates best. Similarly, ad copy can make or break performance. Does a concise, direct message work better, or do longer, story-driven ads drive higher conversions? Audience targeting is another critical area for experimentation. Testing broad vs. niche segmentation allows you to refine your targeting strategy, ensuring you’re reaching the right people at the right time.

Steps to Build an A/B Testing Strategy for CRO Success

Implementing an effective A/B testing strategy is essential for optimizing conversion rates and reducing customer acquisition costs (CAC). However, to ensure reliable results that drive actionable insights, a structured and well-planned approach is required. Below is a step-by-step framework to develop a high-impact A/B testing strategy that maximizes conversions while minimizing risks.

Step 1: Establish a Testing Roadmap

A well-defined testing roadmap sets the foundation for consistent and goal-oriented experimentation.

- Prioritize Test Ideas with the ICE Framework: Use the Impact, Confidence, and Ease (ICE) framework to prioritize test hypotheses. Focus on high-impact areas that are easy to implement and have a strong likelihood of success.

- Create a Structured Testing Calendar: Plan tests methodically to avoid overlapping experiments that could interfere with results. Allocate sufficient time for each test to reach statistical significance before launching new variations.
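The ICE prioritization described above can be sketched in a few lines. This version assumes each idea is rated from 1 to 10 on Impact, Confidence, and Ease and that the score is the average of the three; some teams multiply the ratings instead. The backlog items and ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    name: str
    impact: int      # expected effect on the goal metric, 1-10
    confidence: int  # how sure the team is that it will work, 1-10
    ease: int        # how cheap it is to build and launch, 1-10

    @property
    def ice_score(self) -> float:
        # Averaging is one convention; multiplying the ratings is another.
        return (self.impact + self.confidence + self.ease) / 3


# Hypothetical backlog of test ideas.
backlog = [
    ExperimentIdea("Redesign pricing page", impact=9, confidence=4, ease=2),
    ExperimentIdea("Shorten lead form", impact=8, confidence=6, ease=7),
    ExperimentIdea("Rewrite hero headline", impact=6, confidence=5, ease=9),
]

ranked = sorted(backlog, key=lambda idea: idea.ice_score, reverse=True)
for idea in ranked:
    print(f"{idea.ice_score:.1f}  {idea.name}")
```

The ranked list can then feed the testing calendar, with the highest-scoring ideas scheduled first.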

Step 2: Create Variations That Align With User Behavior

Understanding user behavior is critical for designing variations that resonate with your audience.

- Leverage Heatmaps and Session Recordings: Use heatmaps and session recordings to identify friction points, track user behavior, and pinpoint underperforming areas that need improvement.

- Test One Change at a Time vs. Batch Testing: For high-impact changes, test one element at a time to isolate its impact. For complementary changes, consider batch testing to analyze how combined modifications affect conversion rates.

Step 3: Ensure Proper Experiment Setup

A poorly executed experiment can lead to misleading insights. Setting up tests correctly is crucial for obtaining reliable data.

- Define the Sample Size Using Statistical Calculators: Use A/B testing calculators to determine the required sample size before launch, so the test has enough statistical power to detect the smallest lift you care about.

- Run Pre-Test Quality Assurance (QA) Checks: Conduct rigorous QA to verify that variations render correctly across all devices, browsers, and user segments before launching the test.

Step 4: Monitor and Avoid Pitfalls During the Test

Real-time monitoring helps mitigate risks and ensures the integrity of your test results.

- Identify and Fix Flicker Effects: Minimize flicker, where visitors briefly see the original page before the variation loads. It is often caused by slow-loading testing or personalization scripts, and it can skew results and disrupt the user experience.

- Ensure Consistent Tracking Across Devices: Validate that data tracking remains consistent between desktop and mobile devices to prevent discrepancies.

- Avoid Peeking Bias: Resist the temptation to act on early results. Repeatedly checking for significance and stopping at the first apparent win inflates the false-positive rate and leads to misleading conclusions.
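The cost of peeking can be shown with a small simulation. The sketch below runs A/A tests, where both groups have the same true conversion rate and any "winner" is a false positive, and compares two policies: stopping at the first significant interim look versus checking only once at the end. The run count, number of looks, traffic per look, and 10% conversion rate are all arbitrary assumptions.

```python
import random
from math import sqrt
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(runs=300, looks=5, visitors_per_look=200,
                      rate=0.10, alpha=0.05, seed=7):
    """Return (false-positive rate with peeking, rate with one final check)."""
    rng = random.Random(seed)
    peeking_hits = 0
    final_hits = 0
    for _ in range(runs):
        conv_a = conv_b = n = 0
        significant_at_any_look = False
        for _ in range(looks):
            # Both groups share the same true rate, so there is no real lift.
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_look))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_look))
            n += visitors_per_look
            p = p_value(conv_a, n, conv_b, n)
            if p < alpha:
                significant_at_any_look = True
        peeking_hits += significant_at_any_look
        final_hits += p < alpha  # p here comes from the last look only
    return peeking_hits / runs, final_hits / runs


peeking_rate, final_rate = simulate_aa_tests()
print(f"False positives when stopping at any significant look: {peeking_rate:.1%}")
print(f"False positives when checking once at the end: {final_rate:.1%}")
```

Because the final check is one of the looks, the peeking policy can never produce fewer false positives than the single check, and each extra look adds another chance to be fooled by noise.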

Step 5: Decide on the Winning Variation and Scale It

Once the test concludes, accurately interpreting results is key to driving meaningful improvements.

- Interpret Lift Percentages and Statistical Significance: Evaluate the statistical significance of the winning variation and ensure that the observed lift is consistent across audience segments.

- Run Follow-Up Experiments for Further Optimization: Build on successful tests by iterating and running follow-up experiments to refine and optimize results.

- Document Key Learnings for Future Tests: Create a centralized knowledge base to document insights, successes, and failures to inform future testing initiatives and prevent repetition of mistakes.

Continuous experimentation is the backbone of a successful CRO strategy. A/B testing should not be treated as a one-off initiative but as an ongoing process to refine user experiences.

How to analyze A/B test results

Running an A/B test is only half the battle—the real value comes from how you interpret and apply the results. Many marketers make the mistake of either stopping tests too soon or misreading data, leading to flawed conclusions. Proper analysis ensures you’re making data-driven marketing decisions that genuinely improve performance. Let’s break down the critical steps to evaluating A/B test results effectively.

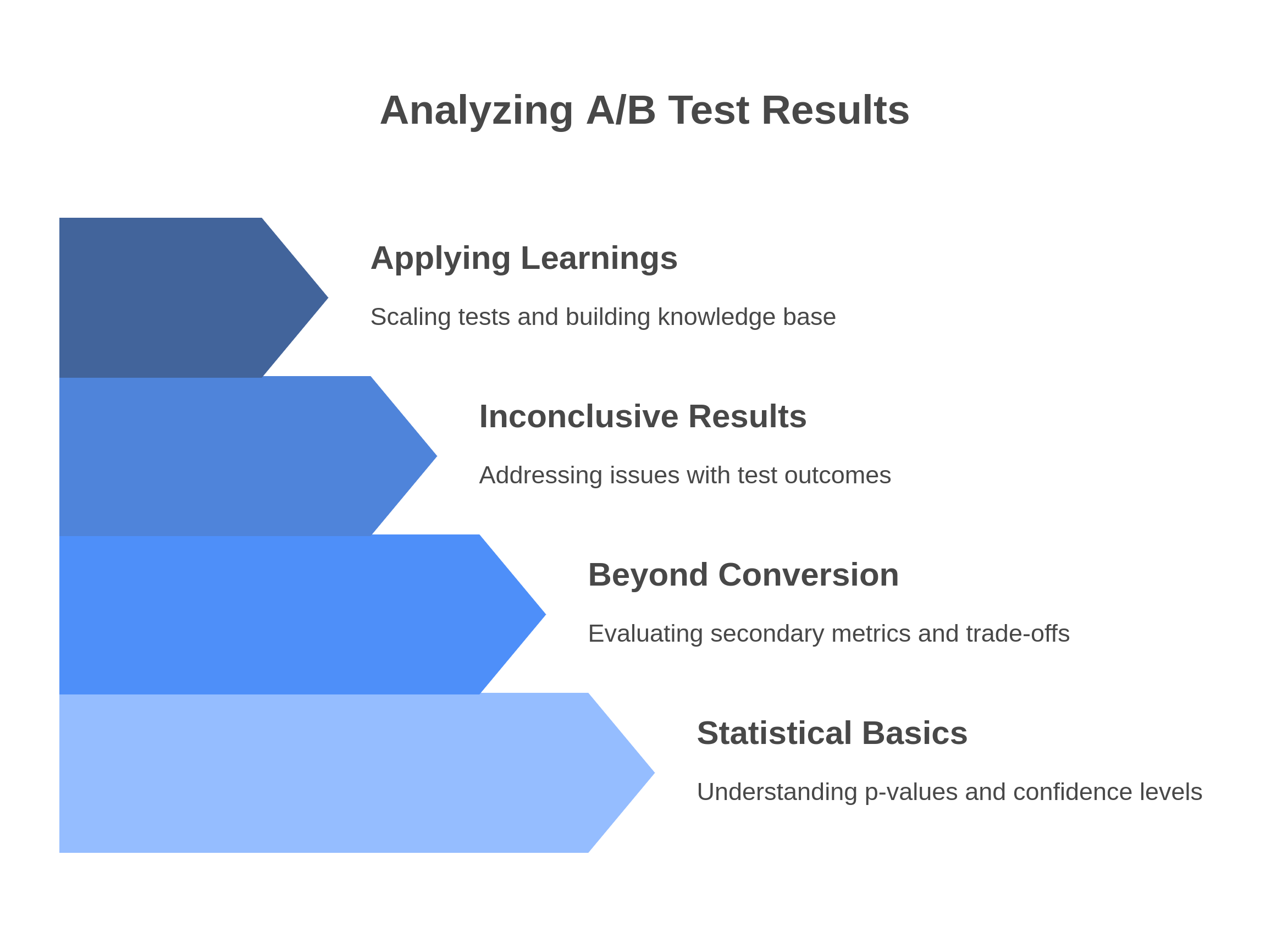

Step 1: Understanding Statistical Significance & Confidence Intervals

Not all test results are created equal. Just because one variation shows a higher conversion rate doesn’t mean it’s the right choice. Statistical significance helps you determine whether your result is due to an actual improvement or just randomness. The common convention is a 95% confidence level, which means accepting a 5% risk of declaring a winner when there is actually no difference between the variations.

To interpret results correctly, you need to understand p-values. The p-value is the probability of seeing a difference at least as large as the one observed if the change had no real effect, so a low p-value (≤ 0.05) indicates the result is unlikely to be random noise. However, relying solely on p-values can be misleading, because they say nothing about the size of the effect. Confidence intervals give you a range in which the true impact likely falls. If the confidence interval for the difference in conversion rates between B and A includes 0, your test isn’t statistically significant, and you shouldn’t rush to implement the change.
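For readers who want to see the arithmetic, here is a minimal sketch of a two-proportion z-test that reports both the p-value and a confidence interval for the difference between the two conversion rates. It relies on the normal approximation, which assumes reasonably large samples and non-zero conversion counts, and the visitor and conversion numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def compare_conversion_rates(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Compare variation B against control A using a normal approximation."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    diff = rate_b - rate_a

    # p-value: pooled standard error under the "no difference" assumption.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = diff / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # Confidence interval for the difference: unpooled standard error.
    se_diff = sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    ci = (diff - z_crit * se_diff, diff + z_crit * se_diff)
    return {"diff": diff, "p_value": p_value, "ci": ci}


# Hypothetical results: 10,000 visitors per group.
clear = compare_conversion_rates(conv_a=500, n_a=10000, conv_b=580, n_b=10000)
unclear = compare_conversion_rates(conv_a=500, n_a=10000, conv_b=520, n_b=10000)
for label, result in [("500 vs 580", clear), ("500 vs 520", unclear)]:
    low, high = result["ci"]
    print(f"{label}: p={result['p_value']:.3f}, 95% CI for lift=({low:.4f}, {high:.4f})")
```

In the first comparison the interval sits entirely above 0, while in the second it straddles 0, which is the situation where implementing the change would be premature.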

Step 2: Looking Beyond the Primary Metric

Most A/B tests focus on a primary metric like conversion rate, but stopping there can lead to incomplete insights. A variation that improves sign-ups might reduce engagement, retention, or revenue per user—metrics that indicate long-term success. For example, a shorter checkout form might boost initial conversions but lead to a higher refund rate due to unqualified buyers.

Analyzing secondary metrics helps identify trade-offs. Did the winning variation attract more conversions at the expense of lower customer lifetime value? Did it improve engagement but increase bounce rates? A/B testing tools allow you to track multiple KPIs, ensuring that your optimizations lead to sustainable growth rather than short-term spikes.

Step 3: Handling Inconclusive or Negative Test Results

Not all A/B tests produce clear winners. Sometimes, your variations perform the same (inconclusive results) or worse than expected (negative results). That doesn’t mean the test was a failure—these outcomes still offer valuable insights.

If your test is inconclusive, check for data quality issues like tracking errors, sample bias, or uneven traffic distribution. Additionally, test duration and sample size play a crucial role—ending a test too soon might result in misleading data. If you’re confident in the accuracy of your test, the next step is to iterate with a new hypothesis. Maybe the tested change wasn’t bold enough, or the audience segment wasn’t the right fit. Instead of scrapping the test, refine your hypothesis and try again.
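One of the data quality checks mentioned above, uneven traffic distribution, can be tested directly with a chi-square goodness-of-fit test, often called a sample ratio mismatch check. The sketch below assumes a planned 50/50 split and uses a strict 0.001 threshold, which is a common but not universal choice; the visitor counts are hypothetical.

```python
from math import erfc, sqrt


def sample_ratio_mismatch(n_a, n_b, expected_share_a=0.5, threshold=0.001):
    """Check whether the observed split deviates from the planned split.

    Returns (p_value, flagged). With two groups the chi-square test has one
    degree of freedom, so its p-value equals erfc(sqrt(chi2 / 2)).
    """
    total = n_a + n_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((n_a - expected_a) ** 2 / expected_a
            + (n_b - expected_b) ** 2 / expected_b)
    p_value = erfc(sqrt(chi2 / 2))
    return p_value, p_value < threshold


# Hypothetical visitor counts for a test planned as a 50/50 split.
for n_a, n_b in [(10000, 10100), (10000, 10600)]:
    p, flagged = sample_ratio_mismatch(n_a, n_b)
    status = "investigate the setup" if flagged else "looks fine"
    print(f"A={n_a}, B={n_b}: p={p:.5f}, {status}")
```

A flagged split usually points to a setup problem, such as a redirect, a tracking gap, or bot filtering that affects one variation more than the other, rather than to anything about user preferences.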

Step 4: Applying Learnings to Other Areas

A/B testing isn’t a one-off process—it’s about continuous optimization. If a variation significantly improves performance, consider scaling it beyond the tested page. Can you apply the same changes to different landing pages, email campaigns, or ad creatives? Successful tests can inform broader marketing strategies.

To maximize learnings, build an A/B testing knowledge base—a centralized document or dashboard where you track past tests, results, and insights. This prevents redundant experiments and helps new team members learn from previous successes (and failures). Over time, this repository becomes a valuable asset, enabling faster, smarter decision-making.

Conclusion

A/B testing is not just a one-time experiment—it’s a continuous optimization process that fuels long-term success. In 2025, as competition intensifies and customer behaviors shift rapidly, relying on gut instincts alone is a risky move. A well-structured A/B testing strategy enables businesses to make data-driven marketing decisions, refine user experiences, and optimize conversion rates with precision.

The key to effective A/B testing lies in methodical execution—setting clear goals, forming strong hypotheses, running statistically sound experiments, and analyzing results beyond just surface-level metrics. Even tests that produce inconclusive or negative results contribute to growth by revealing valuable insights about your audience and website performance. Instead of chasing quick wins, focus on building a culture of experimentation where every test, win or lose, refines your overall strategy.

To stay ahead in 2025, businesses must integrate A/B testing tools seamlessly into their marketing stack, embrace continuous learning, and scale successful experiments across multiple channels. By doing so, you’re not just improving individual elements—you’re systematically unlocking higher engagement, conversions, and revenue over time. The brands that treat A/B testing as a growth engine, rather than a side project, will dominate their markets in the years ahead.